Some see machine learning (ML) as an analytical ‘easy button’. After all, who doesn’t want a powerful tool engineered to uncover the value in data and help business leaders make better-informed decisions? While ML can deliver critical, business-enabling insights, the road to achieving these results is rarely easy or straightforward. To use ML effectively for business purposes, organizations must understand the risks these capabilities can introduce and be prepared to mitigate them. The increasingly visible category known as Privacy Enhancing Technologies (PETs) is answering this challenge by allowing ML capabilities to be used in a way that protects the sensitive interests of business users.

Before we explore the unique capabilities PETs bring to the table, let’s specify what we mean by ML. While this may seem basic at this point, experience tells us that cross-functional teams at large organizations rarely work from a standard definition. Those contributing directly to data science have a clear picture, but in reality the impact of ML is felt by, and increasingly driven by, stakeholders outside an organization’s core data science functions. A shared baseline definition gives us a solid foundation to build upon.

At its most basic, ML refers to a set of algorithmic techniques used to build and apply ‘smart’ constructs (models) that extract insights from data. A practical application of artificial intelligence (AI), ML lets users program a computer to perform such a task not through explicit instructions, but by having the computer analyze a large body of data and work out the instructions for itself through a process called “training.” During training, the algorithm finds patterns in a set of training data that map input data attributes to target outputs, producing an ML model that captures those patterns.

Because a good deal of effort goes into creating an effective model, ML models should be treated as intellectual property (IP) and valuable business assets. For organizations operating in regulated or otherwise sensitive spaces, models must also be considered from a privacy and security standpoint. In many business applications, effective ML models are trained on sensitive data covered by privacy regulations, so any vulnerability in the model itself is a potential direct liability. This is especially true when such models are applied to data sources distributed within and across organizations, as well as to third-party data.

These challenges have given rise to a category of ML solutions, often called Privacy-Preserving Machine Learning, built on PETs: an increasingly recognizable family of technologies that enhance and preserve the privacy of data throughout its lifecycle. From a business perspective, PETs provide an innovative path to extracting critical insights and driving collaboration, including through machine learning, while protecting IP and meeting data sensitivity requirements and compliance standards. This keeps the focus on the business value of the results rather than on the risks inherent in the ML model itself and the activity surrounding it.

PETs are contributing to the broader ML landscape in two substantive ways: by protecting model evaluation and model training.

By ensuring that ML models and their associated results remain encrypted throughout the entire evaluation lifecycle, PETs allow analysts to securely derive insights from data sources across security domains and organizational boundaries, even when using highly sensitive models and evaluating them against untrusted sources. Models can be evaluated across organizations and jurisdictions without exposing either the model itself or the underlying data. This protects both the model owners and the data owners, who retain positive and auditable control of their data assets. This mutual protection is essential for any cross-organizational use case.
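To make encrypted evaluation more concrete, here is a minimal sketch in Python. It assumes a simple linear scoring model and swaps in the Paillier cryptosystem, a well-known additively homomorphic scheme, as the PET; it is not a description of any particular vendor’s product. The model owner encrypts its weights, the data owner computes the score on its own record using only ciphertexts, and only the encrypted result goes back. The function names (`keygen`, `encrypt`, `decrypt`, `encrypted_score`), the toy Mersenne-prime keys, and the sample weights and record are all hypothetical, and the keys are far too small for real use.

```python
import math
import secrets

# Toy Paillier keys built from two Mersenne primes. These are far too
# small and structured for real use; they only illustrate the math.
P, Q = 2**31 - 1, 2**61 - 1


def keygen(p: int = P, q: int = Q):
    n = p * q
    lam = math.lcm(p - 1, q - 1)
    mu = pow(lam, -1, n)  # valid shortcut because the generator is g = n + 1
    return n, (n, lam, mu)  # (public key, private key)


def encrypt(n: int, m: int) -> int:
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    # c = g^m * r^n mod n^2 with g = n + 1; negative m is stored as m mod n
    return (pow(n + 1, m % n, n2) * pow(r, n, n2)) % n2


def decrypt(priv, c: int) -> int:
    n, lam, mu = priv
    m = ((pow(c, lam, n * n) - 1) // n) * mu % n
    # Map back to a signed integer so negative scores survive.
    return m - n if m > n // 2 else m


def encrypted_score(n: int, enc_weights, enc_bias: int, features) -> int:
    """Run by the data owner, who sees only ciphertexts of the model."""
    n2 = n * n
    acc = enc_bias
    for cw, x in zip(enc_weights, features):
        # Enc(w)^x = Enc(w * x); multiplying ciphertexts adds plaintexts.
        acc = acc * pow(cw, x % n, n2) % n2
    return acc


# Model owner: hypothetical integer (fixed-point) weights stay private.
pub, priv = keygen()
weights, bias = [3, -2, 5], 7
enc_w = [encrypt(pub, w) for w in weights]
enc_b = encrypt(pub, bias)

# Data owner: evaluates the encrypted model on its own plaintext record.
record = [4, 10, -1]
enc_result = encrypted_score(pub, enc_w, enc_b, record)

# Model owner: decrypts only the final score, never the raw record.
print(decrypt(priv, enc_result))  # 3*4 - 2*10 + 5*(-1) + 7 = -6
```

Because Paillier supports only addition of encrypted values and multiplication by plaintext constants, this pattern covers linear models; nonlinear models generally call for fully homomorphic encryption or secure multiparty computation. Note also that in this sketch the model owner learns the final score, so a real deployment has to decide which party is entitled to see results.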

PETs also allow models to be trained in encrypted form, keeping the model development process, the model itself, and the interests of all parties involved protected. This can enable encrypted federated learning and secure use of data across disparate, decentralized datasets. Such capabilities allow organizations to use ML to securely derive insights from data sources they don’t own or control, even when that data is sensitive.
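Federated learning can be sketched in a similar spirit. The example below is a minimal, hypothetical illustration assuming a one-parameter linear model (y ≈ w·x), three parties holding made-up toy data, and federated averaging. In place of full encryption it uses pairwise additive masking, a lightweight secure-aggregation technique: each party’s update is hidden behind random masks that cancel only when all updates are summed, so the aggregator learns the average update but no individual contribution. All names, data, and parameters here are illustrative assumptions.

```python
import secrets

MOD = 2**32      # masked values live in the integers mod 2^32
SCALE = 2**16    # fixed-point scale for encoding floats as integers


def local_update(w: float, data, lr: float = 0.1) -> float:
    """One gradient-descent step on mean squared error for y ≈ w * x."""
    grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
    return w - lr * grad


def encode(v: float) -> int:
    return round(v * SCALE) % MOD


def decode(v: int) -> float:
    if v >= MOD // 2:  # values in the upper half represent negatives
        v -= MOD
    return v / SCALE


def pairwise_masks(num_parties: int) -> list[int]:
    """Party i adds s_ij and party j subtracts it, so all masks sum to 0.

    Simulated centrally here; in practice each pair would derive s_ij from
    a shared key so the aggregator never sees it.
    """
    masks = [0] * num_parties
    for i in range(num_parties):
        for j in range(i + 1, num_parties):
            s = secrets.randbelow(MOD)
            masks[i] = (masks[i] + s) % MOD
            masks[j] = (masks[j] - s) % MOD
    return masks


# Hypothetical local datasets; each stays with its owner.
parties = [
    [(1.0, 2.1), (2.0, 3.9)],
    [(3.0, 6.2), (4.0, 7.8)],
    [(0.5, 1.0), (1.5, 3.1)],
]

w = 0.0
for _ in range(20):
    updates = [local_update(w, data) for data in parties]
    masks = pairwise_masks(len(parties))
    masked = [(encode(u) + m) % MOD for u, m in zip(updates, masks)]
    # The aggregator sees only masked values; the masks cancel in the sum.
    total = sum(masked) % MOD
    w = decode(total) / len(parties)

print(f"global weight after 20 rounds: {w:.3f}")
```

For brevity, the masks are generated in one place; in a real protocol each pair of parties would agree on its shared mask through a key exchange, and the protocol would also need to handle parties that drop out mid-round.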

These PETs-powered ML applications have far-reaching implications across industry verticals, especially for the use of publicly available, open-source, or third-party data sources, which are increasingly important in today’s data-rich environment. PETs can expand the utility of data for ML by eliminating risk factors that frequently act as gating factors, keeping that data from being used at all. Further, PETs can create a strategic data advantage for organizations and their partners by ensuring business-critical indicators are both protected and prioritized.

With the push to capitalize on the value of ML, it is critical that organizations understand both the risks inherent in the space and the protections available. The ability to harness these capabilities while protecting business interests is key to the impact and longevity of their ML initiatives. PETs ensure ML models can be used in a way that delivers business-enabling insights without compromising IP or sensitive and regulated data, unlocking data value for the next frontier of secure data usage.

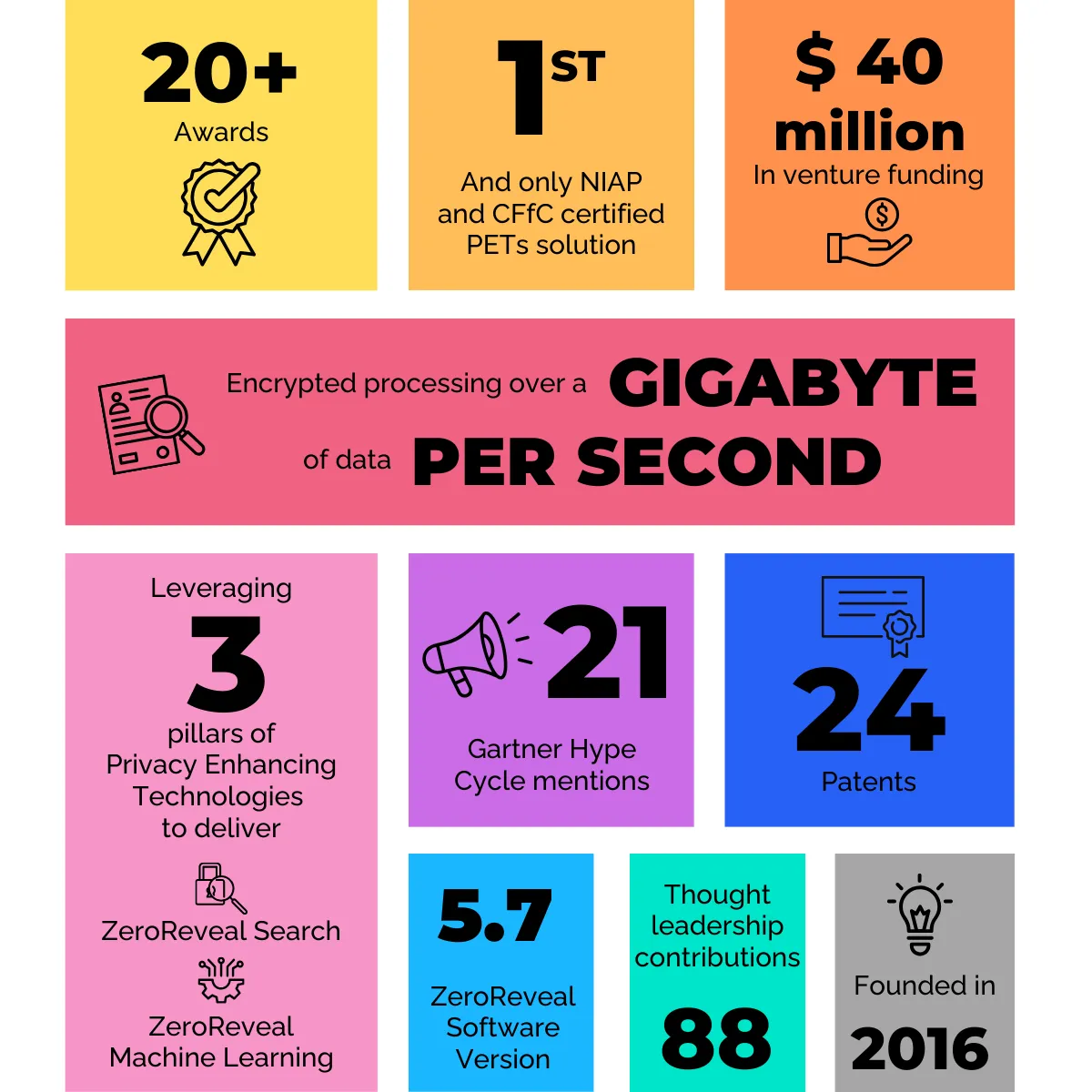

About the Author: Dr. Ellison Anne Williams is the Founder and CEO of Enveil. Building on more than a decade of experience leading avant-garde efforts in the areas of large-scale analytics, information security, computer network exploitation, and network modeling, Ellison Anne founded the Privacy Enhancing Technology startup in 2016 to protect sensitive data while it's being used or processed.

[Also published at Business Reporter]